App Rescue

Fix My App Before It Costs More Time, Users, and Revenue

Focused debugging, refactoring, and stabilization for broken frontend or backend flows, fragile MVPs, and production issues that keep resurfacing.

When someone searches for "fix web app" or "fix SaaS", the situation is usually already expensive. Releases are slipping, bugs keep coming back, customers are hitting inconsistent states, and nobody fully trusts the codebase anymore. At that point, the real priority is not adding another feature. The priority is stopping the product from leaking time, confidence, and momentum.

I help founders and teams stabilize broken apps, untangle brittle logic, and move prototypes toward production-ready behavior. That can mean debugging a specific failure, repairing a shaky backend flow, improving data handling, or reworking the parts of the app that keep turning simple changes into risky deployments. The work is practical: diagnose the issue, fix what matters first, and leave the product in a stronger state than before.

Who This Is For

Who this page is for

This page is for teams that already have software in the wild but no longer trust it. Sometimes the issue is a critical bug. Sometimes it is a product that technically works yet fights every release because the codebase has become too brittle.

It is especially relevant for founders who inherited an MVP, outsourced build, or half-finished product and now need someone to diagnose the real problem instead of stacking another patch on top of it.

Founders with a fragile MVP

You are not ready for a rewrite, but the current app keeps failing users or slowing the team down every time a small change is requested.

Product teams facing recurring bugs

The same issue keeps returning under different names because the root cause was never addressed at the workflow or data-model level.

Agencies or operators taking over a messy build

You need an outside engineer who can read through unfamiliar code fast, isolate the risky sections, and improve them without wrecking delivery timelines.

Businesses hit by performance or reliability issues

The app is technically live, but production confidence is low because slow flows, weak validation, or inconsistent state handling keep hurting the experience.

Problems Solved

Problems I solve when an app is already unstable

Most unstable apps do not fail because one bug slipped through. They fail because there is no reliable shape to the system. A rushed MVP grew without guardrails. Critical flows were never hardened. Error handling is thin, state management is confusing, and every patch makes the code a little more unpredictable.

That pattern shows up in early SaaS products, internal tools, and client portals all the time. The labels change, but the underlying pain points are familiar.

Features work only in the happy path

A flow may look fine in the demo account and still break for real users because loading states, invalid inputs, race conditions, retries, and permissions were never modeled carefully. That is how unstable apps survive until production traffic exposes the gaps.
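As a rough illustration of what modeling those states can look like, here is a minimal TypeScript sketch. It assumes a TypeScript frontend, and the `RequestState` type and `describeState` helper are hypothetical names used only for this example, not code from any client project. The idea is that loading, failure, and retry states are part of the type, so the compiler complains when one of them is left unhandled.

```typescript
// Hypothetical state model for one data-loading flow; all names are illustrative.
type RequestState<T> =
  | { status: "idle" }
  | { status: "loading" }
  | { status: "success"; data: T }
  | { status: "error"; message: string; retryable: boolean };

// Every state must be handled. The `never` assignment in the default branch
// makes the compiler flag any state that is added later but not rendered.
function describeState<T>(
  state: RequestState<T>,
  render: (data: T) => string
): string {
  switch (state.status) {
    case "idle":
      return "Nothing requested yet";
    case "loading":
      return "Loading...";
    case "success":
      return render(state.data);
    case "error":
      return state.retryable
        ? `${state.message} (tap to retry)`
        : state.message;
    default: {
      const unreachable: never = state;
      return unreachable;
    }
  }
}
```

A demo account usually exercises only the `success` branch. Real traffic exercises the other three, which is why they are worth writing down explicitly.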

The backend is technically alive but operationally fragile

Endpoints return data, but the surrounding logic is brittle. Validation is inconsistent, database assumptions are shaky, and one edge case can leave records out of sync. Teams feel this as random bugs. The real issue is weak system discipline.
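One common way to tighten this is to validate untrusted input once, at the edge of the API, before anything is written. The sketch below is an assumption-laden example rather than a prescribed implementation: `ApplicationInput`, `ParseResult`, and `parseApplication` are hypothetical names, the field names are invented for illustration, and the email pattern is deliberately simple.

```typescript
// Hypothetical payload for a job application; field names are assumptions.
interface ApplicationInput {
  email: string;
  jobId: number;
}

type ParseResult<T> =
  | { ok: true; value: T }
  | { ok: false; errors: string[] };

// Validate untrusted input once, at the boundary, before any database write.
function parseApplication(body: unknown): ParseResult<ApplicationInput> {
  const errors: string[] = [];
  const record = (
    typeof body === "object" && body !== null ? body : {}
  ) as Record<string, unknown>;

  // Normalize the email so the same address is never stored in two spellings.
  const email =
    typeof record.email === "string" ? record.email.trim().toLowerCase() : "";
  if (!/^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(email)) {
    errors.push("email is missing or malformed");
  }

  const jobId = record.jobId;
  if (typeof jobId !== "number" || !Number.isInteger(jobId) || jobId <= 0) {
    errors.push("jobId must be a positive integer");
  }

  if (errors.length > 0) return { ok: false, errors };
  return { ok: true, value: { email, jobId: jobId as number } };
}
```

Downstream code then receives either a clean, typed value or a list of reasons the request was rejected, instead of each handler making its own guesses about the input.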

Nobody can debug quickly anymore

When naming is poor, logic is tangled, and responsibility is spread across too many files or layers, fixing even a medium-sized issue becomes slow. The codebase starts resisting change, which makes every new request feel heavier than it should.

Prototype decisions are now blocking growth

What was acceptable for the first launch becomes dangerous once users depend on the product. Payment flows, onboarding, access control, notifications, and reporting all need more discipline than an initial prototype usually gets.

What I Help With

How I approach app rescue and stabilization

Fixing a broken app is not just about finding the line that throws an error. It is about identifying the smallest set of technical changes that meaningfully improves reliability. Some problems need a targeted patch. Others need a cleaner boundary, a schema adjustment, or a safer workflow between frontend and backend.

I focus on restoring control first. That means reproducing failures, locating the real source of instability, and tightening the parts of the system that keep spreading risk. When possible, I do that without forcing a rewrite. When a rewrite is justified, I can explain exactly why, what portion needs to change, and how to reduce disruption while doing it.

Debug from symptoms back to structure

I treat bugs as signals, not isolated surprises. If the same class of issue keeps appearing, there is usually a structural cause beneath it. Fixing that root cause protects the product better than stacking temporary patches.

Stabilize the highest-risk flows first

Login, onboarding, data creation, payments, application submission, and admin actions usually deserve priority over cosmetic cleanup. I prefer making the product trustworthy before making it pretty.

Turn prototypes into production-capable systems

Prototype-to-production work often means better validation, clearer state handling, safer database operations, improved feedback for users, and deployment decisions that do not break under normal growth.

Keep the repair path realistic

A rescue project should reduce chaos, not create more of it. I aim for fixes that are understandable, reviewable, and compatible with how the product needs to evolve next.

How I Work

A rescue workflow built for speed and control

When an app is unstable, long discovery phases are frustrating. I move quickly, but not blindly. The process is designed to surface the real issue fast and create a repair path that a business can actually live with.

Step 1: Reproduce the failure and narrow the blast radius

I start by understanding which flows break, for whom, and under what conditions. That helps separate urgent production failures from noisy but lower-risk issues. A good rescue starts with disciplined triage.

Step 2: Inspect the workflow end to end

I trace the issue across UI state, API requests, validation, database writes, and side effects. Broken apps often fail between layers, so looking at only the frontend or only the backend usually misses the real cause.

Step 3: Patch the issue and harden the surrounding logic

A minimal fix is useful only if it stays fixed. I usually pair the main repair with the small structural cleanup needed to prevent recurrence, whether that is a data constraint, clearer conditional logic, or better error paths.
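To make that concrete, take a hypothetical duplicate-submission bug. The sketch below pairs the fix with a guard and an explicit error path. `ApplicationStore` is an in-memory stand-in for a table with a unique constraint on job and email, and `submitApplication` is an invented handler name. In a real system the constraint would normally live in the database itself.

```typescript
// In-memory stand-in for a table with a unique constraint on (jobId, email).
class ApplicationStore {
  private readonly seen = new Set<string>();

  // Returns "created" on the first insert and "duplicate" afterwards,
  // instead of silently writing a second row.
  insert(jobId: number, email: string): "created" | "duplicate" {
    const key = `${jobId}:${email.toLowerCase()}`;
    if (this.seen.has(key)) return "duplicate";
    this.seen.add(key);
    return "created";
  }

  count(): number {
    return this.seen.size;
  }
}

// Hypothetical handler: a retried or double-clicked submit becomes a clear,
// explicit outcome rather than an inconsistent record.
function submitApplication(
  store: ApplicationStore,
  jobId: number,
  email: string
): string {
  const outcome = store.insert(jobId, email);
  return outcome === "created"
    ? "Application received"
    : "You have already applied to this job";
}
```

The patch alone would stop the one reported duplicate. The constraint and the explicit "duplicate" outcome are what keep the same class of bug from returning through a retry, a second tab, or a future code path.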

Step 4: Leave the app easier to reason about

The product should be more maintainable after the rescue than before it. That can mean cleaner code boundaries, simpler flow ownership, or documentation around the exact problem area so future changes are safer.

Case Study

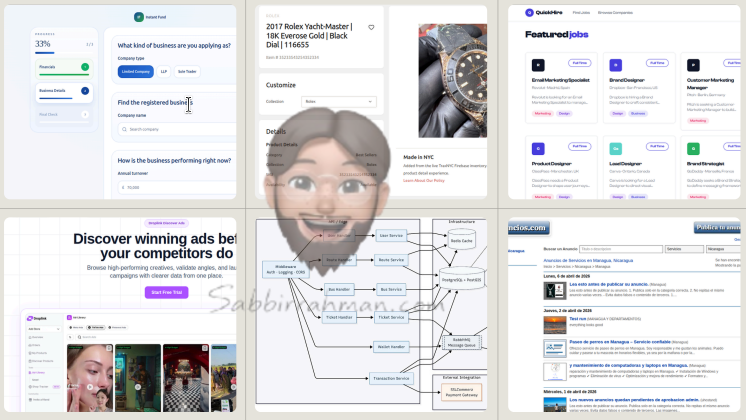

QuickHire: an example of building reliability into a complex workflow

QuickHire matters here because it includes the kinds of flows that become fragile fast when they are implemented carelessly: geolocation, job posting, company profiles, candidate applications, guest access, and public tracking. Those are not simple brochure-page interactions. They are stateful product workflows where weak backend or UI decisions show up quickly.

The platform was built as a UK geolocation-based hiring marketplace with React, Node.js, and PostgreSQL, and it was planned from the beginning to support monetization-ready backend logic. That meant the data model and service design had to account for more than the first demo. It is a good example of the kind of product work where fixing SaaS issues later is much easier if the early build respected real operational constraints.

The same pattern applies in more operational systems too. The Early Warning Alert System for Disease Outbreaks had to combine monitoring, prediction, mapping, notifications, and reporting in one interface, which is exactly the kind of environment where weak architecture becomes painful fast. TraxNYC is another good proof point because premium storefront quality only works if fulfillment, offer flows, and support tooling stay stable behind the scenes.

QuickHire at a glance

- UK geolocation-based hiring marketplace with multiple user journeys, not a single landing page funnel.

- Job posting, applications, company profiles, guest access, and tracking flows designed as connected product logic.

- Monetization-ready backend prepared for subscription and lead unlock models instead of a dead-end prototype.

- Built with React, Node.js, and PostgreSQL to support both speed of delivery and stronger long-term maintainability.

Browse shipped work

See the broader project archive for marketplaces, internal tools, ecommerce builds, and operations systems.

Read the QuickHire case study

Open the project detail page for the UK hiring marketplace used as proof throughout this page.

See Early Warning Alert System

A monitoring and alert platform where reliability mattered across dashboards, mapping, notifications, and reporting instead of only on a single screen.

See TraxNYC

A commerce build that paired premium UX with operational stability so merchandising and support teams could move without engineering bottlenecks.

Why Choose Me

Why clients bring me into rescue work

Rescue projects are different from greenfield builds. They need calm diagnosis, strong technical judgment, and the ability to improve a codebase without pretending it was well planned from day one. I am comfortable working in that messier environment because the goal is not perfection. The goal is renewed control.

I also do not hide behind a long audit if the product needs action now. I want enough context to make good decisions, but I bias toward meaningful fixes, visible progress, and reducing business risk quickly.

I can work inside an existing codebase

Not every problem deserves a rewrite. I am comfortable reading through someone else's structure, identifying pressure points, and improving the system without unnecessary disruption.

I think in product terms, not just bug terms

The most urgent issue is not always the loudest one. I prioritize the parts of the app that affect user trust, revenue, or team operations first, because that is where technical work produces the biggest business relief.

I communicate clearly during uncertainty

When the app is unstable, people need clarity more than optimism. I explain what is known, what is likely, what needs validation, and what the repair path should be so the next step feels grounded.

I leave the app in a stronger state

A rescue should not create a new dependency on the person who fixed it. I aim to improve readability and structure so future development gets easier rather than more specialized.

FAQ

Questions about fixing a broken app

App rescue work usually starts with uncertainty, so these are the questions clients tend to ask before we decide whether a fix, stabilization sprint, or deeper refactor is the right move.

Can you fix my app without rewriting the whole thing?

Usually yes. I start by identifying the smallest set of changes that reduces the biggest risk. If a larger rewrite is truly necessary, I explain why and what portion should change first.

What kinds of issues do you usually handle?

Common rescue work includes broken user flows, unstable APIs, poor validation, tangled state, performance issues, deployment problems, and prototypes that cannot safely handle real usage.

How do you approach an unfamiliar codebase?

I reproduce the failure, trace the workflow end to end, isolate the high-risk layers, and then patch and harden the surrounding logic so the same issue is less likely to return.

Call To Action

Need to fix a broken app or unstable SaaS product?

If your product feels fragile, slow to change, or one release away from another incident, we can look at the real problem and decide whether it needs a focused patch, a stabilization sprint, or a prototype-to-production cleanup.

The fastest useful move is usually not adding more features. It is making the existing product trustworthy again.